Link building is a controversial subject. On one hand, you can use spam link building as a black hat technique to churn and burn sites and rank money sites. Sure, they disappear eventually, but you keep moving to keep ahead of the penalties.

On the other hand, you can carefully craft an outreach campaign to maximize your beneficial links, minimize your harmful links, and in general build a brand and reputation. It’s slow, it’s methodical, and it works very well after a while.

I’ve compiled and provided for you a list of tools and methods you can use to get more backlinks. Most of these will either require you to use proxy servers to get around rate limits for harvesting data, or will benefit enough from using proxies that it’s better to implement them with than without. It is, of course, up to you which techniques you’re comfortable with using.

Please note that many of the techniques and applications on this list can be considered black hat or gray hat depending on context.

Misusing them can earn you a search penalty, particularly since Google has decided to consider all non-content-focused link building as a low-level gray hat operation.

Software Options

Software can be immensely valuable for saving time and energy on data harvesting and submission.

However, it can also be a vector for spam and low quality link building.

The tools I’ve listed below have a wide range of uses, some of them spammier than others. As such, I haven’t linked to the applications themselves. If you can’t locate them based on the name, well, you shouldn’t be straying so close to the metaphorical sun in the first place.

Always exercise caution when using any software application to replace manual effort. I recommend using high-quality proxies in a rotating list so you don’t get your core IP banned or earn a spammer label.
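If you're scripting any of this yourself, a rotating list can be as simple as a round-robin cycle. Here's a minimal sketch, assuming you already have a list of proxy addresses; the addresses below are placeholders from a documentation-only IP range, and `make_proxy_rotator` is a name I made up for illustration.

```python
from itertools import cycle


def make_proxy_rotator(proxies):
    """Return a function that hands out proxies in round-robin order."""
    if not proxies:
        raise ValueError("at least one proxy is required")
    pool = cycle(proxies)

    def next_proxy():
        proxy = next(pool)
        # A scheme -> proxy mapping, the shape many HTTP clients accept.
        return {"http": proxy, "https": proxy}

    return next_proxy


# Placeholder addresses (documentation range), not real proxies.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
    "http://203.0.113.12:8080",
]
next_proxy = make_proxy_rotator(PROXIES)
```

Each call to `next_proxy()` returns the next address in the list and wraps back to the first once the list runs out, so no single proxy carries all of the traffic.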

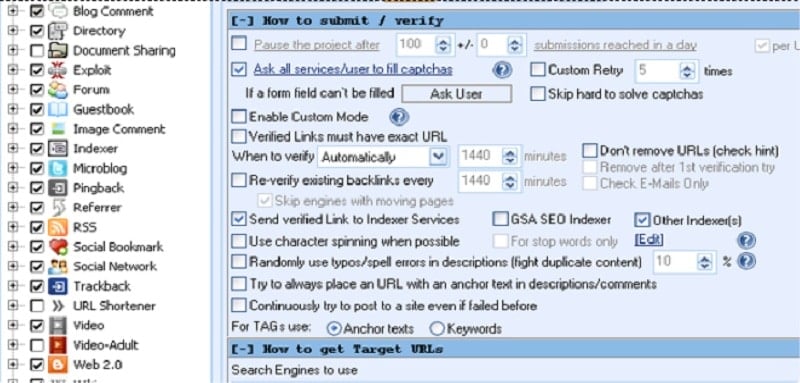

GSA Search Engine Ranker

One of the most high profile black hat link submission engines around right now, GSA is potent because it allows you to set your own rules and doesn’t operate off its own database of sites. That makes it dynamic, and ensures you don’t have to keep updating the program to keep it relevant.

It also has no submission limit, though again, too many submissions too quickly will flag you in ways you don’t want to be flagged.

You may also like: Which Proxies Should I Use for GSA SER (Search Engine Ranker)?

Scrapebox

One of the more generally useful apps in a non-black hat way, Scrapebox allows you to harvest all sorts of data in a dozen different ways. However, because it’s a data harvesting robot, you really need to make use of proxies to protect yourself from rate limits and IP blocks.

Read more: Why the Harvester on Your ScrapeBox Isn’t Working.

In addition to data harvesting, Scrapebox includes specific promotion methods categorized as either black or white hat, so you know what you’re getting into when you use it.

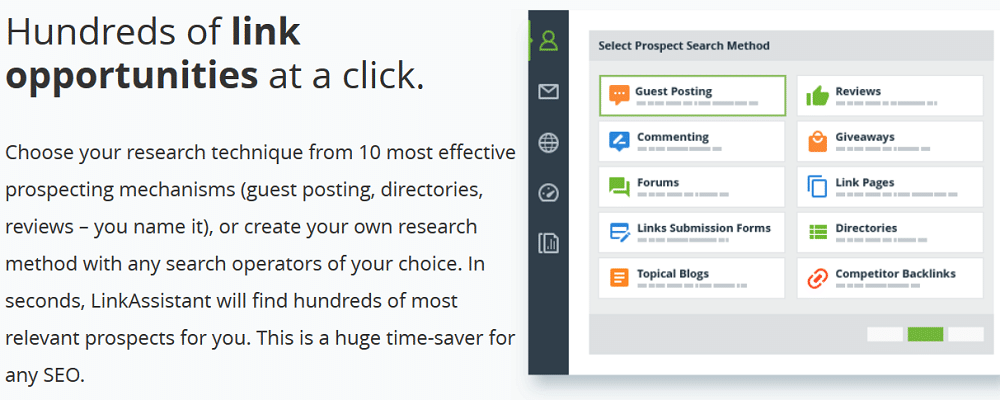

Link Assistant

When it finds these sites, it builds a list and figures out how you can submit links to them, be it through contact messages, blog comments, or other contact methods. The app also stores more data than just a backlink for submission, so your links are surrounded by relevant and useful information.

Read more: Why SEO Proxies & How to find Proxies to use With SEO software

SEO SpyGlass

This app does more than just find backlink opportunities; it uses its own internal criteria to determine just how useful those backlinks might be.

You can set it to specifically only target high-quality links, to avoid all of the spam and low quality links, if you prefer a more careful and curated route. It does this via checking domain rank, Alexa, PageRank, anchor text and social signals, among other things.

SEO Link Robot

A program with both content creation and publication features. The promotion includes social bookmark site submission, RSS submission, and curation in article databases. Content creation – not recommended – includes article spinning, posting, and so forth. It includes a captcha breaker, and some link pyramid structure settings. Uses its own database of relevant submission sites. On the other hand, it’s a little slow to use.

Money Robot Submitter

A heavy-duty automated link submission engine that has a large database of sites to submit to. These range from blogs and blog comment sections to social networks, social bookmarking sites, and forums. It also includes Wiki and various wikia sites, and articles where you can insert yourself as a relevant reference. It’s all focused on speed over outreach, though, so be wary about spam.

GScraper

This is another of the potent data harvesting tools for both white and black hat uses. It’s a very fast, very accurate Google scraper, but it absolutely requires a rotating list of proxies unless you want to be blocked for hours at a time on a regular basis. Seriously, if you have enough proxies behind it, you can scrape a million URLs in the space of 10-15 minutes. Plus it has built-in rules you can use to clean up and sort the data you harvest without having to futz with a CSV manually.
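If your scraper doesn't clean the harvest for you, the most common cleanup rule is easy to script. This is only a sketch of one such rule (keep the first URL seen per domain), not a description of how GScraper works internally; `dedupe_by_domain` is a hypothetical helper name.

```python
from urllib.parse import urlparse


def dedupe_by_domain(urls):
    """Keep the first URL seen for each host, ignoring a leading 'www.'."""
    seen = set()
    kept = []
    for raw in urls:
        url = raw.strip()
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]
        # Skip lines that did not parse as a URL with a host.
        if host and host not in seen:
            seen.add(host)
            kept.append(url)
    return kept
```

Run it over a harvested list before you export anything, and you end up with one prospect per site rather than fifty pages from the same blog.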

EDU Backlinks Builder

This is a smaller scale tool meant for automatically building high quality backlinks specifically from EDU domains. EDU domains do tend to have more value for Google because they’re hard to acquire and difficult to spam.

Links on them are then considered more valuable, which makes tools like this spring up to get them. Use sparingly, otherwise the abuse will be identified and you’ll lose the value you had gained.

LinkSearching

This is a web-based tool that you can use for free, which is great, because some of the tools on this list are quite expensive.

This one doesn’t really automate submission, but it is a robust scraper engine to identify relevant sites and look for link opportunities that both appear natural and pass a good deal of value. It does, however, include a database of link footprints you can use as templates to customize the anchors and submissions you do make.

BuzzStream

This is another web app that is designed as a prospect research and contact information harvesting engine. You can use it to find all the various places you might be able to submit your content, or even just comments and links.

You can then rank these sites according to your own value metrics, and send out personalized templated emails to perform some outreach. The only way it could be considered black hat is the intensity of the scraping, which can earn you a bit of a time out from Google’s web search. Proxies help here.

Integrity

Integrity and Scrutiny are two related apps you can get for various prices. The free version of Integrity checks links and allows you to export data on them. The plus version gives you multisite checking, searching, filtering, and XML sitemap features.

Scrutiny is an upgraded version that includes SEO checks, site monitoring, orphan page detection, and some other SEO factor optimization. You can also get online versions of the apps for a monthly fee rather than buying the stand-alone program once.

Link Bird

This service aims to replace your need for Excel or another spreadsheet manager in your outreach and link building process. It allows you to import data and manages it with a robust set of dashboards, complete with various link building tools. You have a link manager, a rank tracker, keyword research tools, brand monitoring and a lot more.

Kerboo

Formerly known as LinkRisk, this tool is a fairly expensive suite that will cost you around $150 per month. However, for that, you get a wide range of tools and abilities.

Features include a backlink profile auditor, a site information harvester, link and mention monitors, visual analytics, ranking harvesting and monitoring, and an API to hook all of that into a different app you use.

LinkVana

This is less of a tool and more of a hybrid tool and service. In addition to being a content manager and analytics system, it includes services by real human content marketers, who perform human outreach and manual link building using modern acceptable standards.

They form relationships for you so you can focus on creating the high quality content necessary to fuel those relationships.

Ultimate Demon

This is a content submission engine with a huge selection of sites you can send your posts to, automatically. It supports multi-threading, which is good for mass submissions. It also allows you to customize what sites you submit to with scripts, so you can set it to do exactly what you want. It does include article spinning, but again, it’s not recommended that you use it unless you’re really trying for nothing more than a churn and burn.

WhoLinksToMe

The name implies that this is a site that will pull your backlink profile for you, and it will. However, its best use is stalking your competition.

You can point it at any site you want and it will scour the net for the most complete report it can build on their SEO activities. You can see what those sites are doing, where they’re doing it, how they’re doing it, and how you can supplant them with your own strategies.

Ontolo

This is a broad-scale analysis app that helps you out with SEO and link building. It can analyze over 4,000 URLs a second, which is an insane number, and virtually requires proxy use to avoid hitting a rate limit. It’s designed for enterprise-level analysis, but mid-sized businesses can sometimes use it as well. Unfortunately, it’s overkill for small businesses in almost every instance.

Outreach

Outreach options tend to be more valuable, because they’re more focused on building relationships and producing high quality content, both things Google is very interested in seeing you do.

On the other hand, sometimes you need to stray into gray hat techniques in order to produce your white hat content. That’s where proxies come in; harvesting data is perfectly legitimate, but doing it too quickly with software will result in bot flags and IP bans. If you’re harvesting data or performing mass outreach, make sure to protect yourself.

Scraping Emails for Outreach

By using a data scraper you can harvest the contact information from the about page of a wide range of sites. If the sites don’t have an about page, or they don’t have an outreach email listed, you can always try a sneakier route and scrape their Whois data to find the contact information for the domain owner. Sometimes you will have to cross-reference with Facebook to find the right person, though. Still, you can find some personal connections this way when those people don’t have open emails.
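Once you've downloaded an about page, pulling the addresses out is mostly pattern matching. Here's a rough sketch, assuming you already have the page's HTML as a string; the regular expression is deliberately simple and will miss obfuscated addresses like "name [at] site", and `extract_emails` is just an illustrative name.

```python
import re

# A deliberately loose pattern: good enough for plain addresses and mailto: links.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(html):
    """Return unique email addresses in order of first appearance."""
    seen = set()
    emails = []
    for match in EMAIL_RE.findall(html):
        email = match.lower()
        if email not in seen:
            seen.add(email)
            emails.append(email)
    return emails
```

Lowercasing before deduplicating keeps `Editor@example.com` and `editor@example.com` from showing up as two separate contacts.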

Scraping Web Search for Contact Pages

This is another form of contact scraping you can do, generally just by using a tool like Gscraper to find sites that use a keyword you specify and a URL or page title that includes the phrase “contact us.” This will get you a list of relevant sites you can submit links, tips, or messages to in hopes of a link. It’s up to you to figure out how to get those people to accept your messages, though.
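Whatever scraper you feed, the searches themselves are just a keyword plus a footprint. Below is a small sketch that builds those query strings with Google's `intitle:` and `inurl:` operators; the function name and the hyphenated URL slug are my own assumptions about how such pages tend to be named.

```python
def build_footprint_queries(keyword, footprint="contact us"):
    """Build search queries that pair a niche keyword with a page footprint."""
    slug = footprint.replace(" ", "-")
    return [
        # Pages whose title contains the exact phrase.
        f'{keyword} intitle:"{footprint}"',
        # Pages whose URL contains the hyphenated form, e.g. /contact-us.
        f"{keyword} inurl:{slug}",
    ]
```

For example, `build_footprint_queries("gardening")` gives you one title-based and one URL-based query to hand to your scraper, and passing `footprint="write for us"` reuses the same function for guest post hunting.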

Scraping Write for Us Pages

This is the same thing as the previous one, except looking for “contribute” or “write for us” pages. You can use these pages to give you guest posting opportunities. If you want to go black hat, an article spinner works. If you prefer white hat, make some good high quality content, preferably based on the sorts of subjects that do well on your target site.

Harvesting an Influencer Database

The first step in influencer outreach is gathering a large list of influencers to target. You need to rotate through this list so you’re able to spread out your outreach; it doesn’t work if you hit up the same person three times a month. Make everyone you contact feel special even if they’re one of hundreds you touch base with.
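Rotating through the list is easier if you track when you last contacted each person. Here's a minimal sketch, assuming you keep a mapping of names to last-contact dates; the 30-day cooldown is an arbitrary number I picked for the example, not a rule.

```python
from datetime import date, timedelta


def due_for_outreach(contacts, today, cooldown_days=30):
    """Return names that were never contacted or not contacted within the cooldown.

    `contacts` maps a name to the date of the last message, or None if never contacted.
    """
    cutoff = today - timedelta(days=cooldown_days)
    due = [name for name, last in contacts.items() if last is None or last <= cutoff]
    # Never-contacted people first, then whoever has waited longest.
    return sorted(due, key=lambda n: (contacts[n] is not None, contacts[n] or date.min))
```

Anyone you messaged inside the cooldown window simply drops out of the list until enough time has passed.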

Scraping for Broken Links

Scraping Google or specific sites for broken links is a good way to implement some broken link building. Identify content that no longer exists and that you can replace, and scrape looking for links to that content. Proxies help as usual by avoiding bans and rate limits, giving you a faster list so you can act sooner.
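The checking half of that process can be sketched in a few lines. This example pulls the absolute links out of a page and asks a status checker about each one; `get_status` is a hypothetical callable you supply (for instance, a wrapper around your HTTP client and proxy list) that returns a status code, or `None` if the request failed.

```python
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collect absolute http(s) links from anchor tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href and href.startswith(("http://", "https://")):
                self.links.append(href)


def find_broken_links(html, get_status):
    """Return (url, status) pairs for links that error out or return 4xx/5xx."""
    collector = LinkCollector()
    collector.feed(html)
    collector.close()
    broken = []
    for url in collector.links:
        status = get_status(url)  # caller-supplied; None means no response
        if status is None or status >= 400:
            broken.append((url, status))
    return broken
```

Keeping the network call outside the function means you can route it through whatever proxy setup you use, and test the parsing without touching the network.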

Searching for Paid Guest Post Opportunities

This is very similar to the write for us tip, but it’s also a way to make a little money on the side. A lot of high profile blogs will pay for a guest post, assuming that guest post is of sufficient quality. This is not a technique you can use with an article spinner! Anyone paying for a guest post is going to be looking it over pretty carefully before paying you, so make sure you’re submitting high quality content.

Stalking Competitor Outreach

There are a lot of tools, including a couple listed above, that allow you to look up the SEO strategies and link sources your competitors are using. Ideally, you will be able to find sites that link to your competitors, and supplant their links. If not, you can still try to get your own links from those sites, so you match what they do and add your own advantages on top to outstrip them.

Scraping Brand Mentions

Brand mentions are implied links, and while Google has talked about giving value to implied links in the past, they haven’t really done it just yet. So, in the meantime, what you can do is scrape any and all mentions of your brand. You can do two things with this information. First, you can pull out the brand mentions that are complaints and work to resolve the situation for a customer service boost. Second, you can find positive brand mentions and message the site owners to turn those mentions into explicit links.
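For the second use, you need to separate pages that mention you and already link to you from pages that only mention you. Here's a rough sketch, assuming you've already scraped the pages into a mapping of URL to HTML; the brand name and domain are whatever you pass in, and the matching is a plain case-insensitive substring check.

```python
from html.parser import HTMLParser


class MentionScanner(HTMLParser):
    """Track whether a page mentions a brand and whether it links to its domain."""

    def __init__(self, brand, domain):
        super().__init__()
        self.brand = brand.lower()
        self.domain = domain.lower()
        self.mentioned = False
        self.linked = False

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = (dict(attrs).get("href") or "").lower()
            if self.domain in href:
                self.linked = True

    def handle_data(self, data):
        if self.brand in data.lower():
            self.mentioned = True


def unlinked_mentions(pages, brand, domain):
    """Return URLs of pages that mention the brand but never link to the domain."""
    results = []
    for url, html in pages.items():
        scanner = MentionScanner(brand, domain)
        scanner.feed(html)
        scanner.close()
        if scanner.mentioned and not scanner.linked:
            results.append(url)
    return results
```

The URLs that come back are your outreach list: pages that already talk about you and only need a nudge to add the link.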

Scraping Data to Prioritize Link Building Effort

This is a built-in feature for some of the tools in the first section, but you can do it on your own with API access to some tools like Moz or Majestic. Compile a list of sites you can submit your link to, and run that list through those APIs to scrape data about them. Sort by the metrics you care about for backlink quality, and prioritize the best sites.
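The sorting step might look something like the sketch below. It assumes you've already pulled metrics into a list of dictionaries; the `authority` and `spam_score` field names and the thresholds are placeholders I made up, so map them to whatever your metrics provider actually returns.

```python
def prioritize(prospects, min_authority=30, max_spam=0.3):
    """Filter out weak or spammy prospects, then sort the best ones first.

    Each prospect is a dict with hypothetical keys:
    'site', 'authority' (higher is better), and 'spam_score' (lower is better).
    """
    keep = [
        p for p in prospects
        if p["authority"] >= min_authority and p["spam_score"] <= max_spam
    ]
    # Highest authority first; ties broken by the lower spam score.
    return sorted(keep, key=lambda p: (-p["authority"], p["spam_score"]))
```

Start your outreach at the top of the returned list and work down until the effort stops being worth the link.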

Finding Hosts of Competing Content

If your competitors are engaged in scraping-powered backlink campaigns, they might be using more innovative strategies than you are, or targeting keywords you didn’t think to target. You can scrape data about their links or their name for contributions, and identify sites that accept guest posts for backlinks. Use them yourself.

Harvesting Whois and Stalking Site Owners

Whois data gives you a lot of information you can use, ranging from infographic fodder about domain registrars of top sites, to contact information you can use for personal outreach. Be sparing with the personal stuff; a lot of people don’t like an internet detective.

Read more: Using Proxies to Scrape Whois Domain Data.

More Gray Hat SEO Techniques (Be Careful When Using Them!)

Leaving Blog Comments Automatically

Blog comment spam is a huge problem and has forced many blogs to run anti-spam programs or turn off their comments entirely. That said, if you’re careful, you can still leave relevant and valuable comments and get backlinks because of them. The key is to use your scraping to identify relevant blogs with open comments sections, but to create and submit your comments manually. That, and avoid comments that look like spam.

Making Automatic Forum Posts

Forums are relatively low value these days, so they aren’t that great as a white hat technique. However, you can often find relevant but abandoned forums with open guest registrations and use them to submit automatic posts. As long as the forum is visible to the public, those links will be indexed, and you can earn a slightly higher search ranking for posting them. Just try to target forums that have recent activity; hitting up forums that haven’t seen a post since 2010 is a sure sign of spam.

Scraping New Niche Content to Make Top Lists

Run a regular scraping campaign each week or each month, looking for new content in your niche. That means setting searches with keywords and a “past week” time filter. You can then have data for a robust and varied “best content of the week” top list series, giving you great backlink opportunities from every site you feature. For bonus points, scrape contact information so you can notify sites when you feature them.

Submitting Content for Syndication

Content syndication is risky for some sites because, if it’s done poorly, it can result in duplicate content penalties. If you’re willing to risk it, or you’re submitting content with a link but which isn’t attached to your name, you can use bots and scrapers to find syndication sites to submit your own content to. This can be very good for links, because many sites that syndicate content have higher rankings than the sites that submit that content.

Scraping Data to Create Compelling Content

This is a more traditional use of data scraping, and it’s what Google thinks of when they put rate limits on their search. For example, maybe you want to do an infographic on the “top X best” titles of blog posts, looking at the distribution of what number X is. You can scrape that data using a tool and proxies to keep from getting rate-limited and throwing off your harvest.
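To make that example concrete, here's a small sketch of the counting step, assuming you've already scraped a list of post titles. The pattern only catches titles phrased like "10 Best ..." or "Top 10 Best ...", which is an assumption on my part about what counts as a "top X best" title.

```python
import re
from collections import Counter

# Matches "10 Best", "Top 10 Best", "The 7 best", and similar phrasings.
TOP_X_RE = re.compile(r"\b(?:top\s+)?(\d{1,3})\s+best\b", re.IGNORECASE)


def top_x_distribution(titles):
    """Count how often each number X appears in 'top X best' style titles."""
    counts = Counter()
    for title in titles:
        match = TOP_X_RE.search(title)
        if match:
            counts[int(match.group(1))] += 1
    return counts
```

Calling `.most_common()` on the result gives you the numbers ranked by how often they show up, which is the raw material for the infographic.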

You may also like:

- Proxies for Preventing Bans and Captchas When Scraping Google

- The Ultimate Guide to Scraping Craigslist Data with Software

- How to Scrape Data from Linkedin Using Proxies

Finally, when all else fails and you find you used some of these strategies in a way that got you penalized, you can use some of the same tools to compile a list of bad links to disavow. This will help you get rid of link-based penalties and tell Google that, hey, you’re totally on the up and up and it wasn’t your fault.
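Google's disavow tool takes a plain text file with one entry per line: a full URL, a `domain:` line to disavow a whole domain, or a `#` comment. Here's a minimal sketch that turns an audited list into that format; the function name and the header comment are my own, and deciding which links are actually bad is still up to you.

```python
from urllib.parse import urlparse


def build_disavow_file(bad_urls, whole_domains=()):
    """Return disavow file text: domain-level entries first, then single URLs."""
    domains = sorted({d.lower() for d in whole_domains})
    lines = ["# Disavow list generated from link audit"]
    lines += [f"domain:{d}" for d in domains]
    for url in sorted(set(bad_urls)):
        host = (urlparse(url).hostname or "").lower()
        # Skip URLs already covered by a domain-level entry.
        if host not in domains:
            lines.append(url)
    return "\n".join(lines) + "\n"
```

Write the returned text to a `.txt` file and upload it through the disavow tool. Use domain-level entries for sites that are spam through and through, and single URLs for one-off bad links.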