Craigslist is a notoriously difficult site to use for data harvesting, because of how they have everything set up. There’s no easy way to scrape data, at all.

On most commerce, database, and social sites, the developers provide an API for power users to scrape data and output it in a format they want. For example, look at how much documentation Facebook has for its API.

You can pull practically any Insights data from a page you own, and you can pull a bunch of public data from pages you don’t own. It’s all surprisingly simple, even.

Craigslist is a special case. They have an API, but it functions in reverse. Facebook’s API allows you to pull data, but does not allow posting. You need to use apps for that functionality. The Craigslist API allows you to post, in bulk if you want, but it doesn’t allow you to pull read-only data.

It’s quite a backward implementation, but it makes a certain amount of sense from the Craigslist point of view.

They gain a benefit from allowing businesses, particularly real estate managers with large numbers of properties, to post in bulk via a simple API. On the other hand, they gain nothing by allowing third parties to scrape data and, presumably, display it on a non-Craigslist site.

Even if all you want to do is run some data analysis, it’s just that much more stress on their servers for which they gain nothing.

Craigslist does have RSS feeds you can subscribe to in various subsections and regions of the site. These are available for personal use, but if you try to use them to harvest data in bulk and use that data elsewhere, you’re likely to have your access blocked. Craigslist even says in their terms of service, flat out:

- You agree not to use or provide software (except for general purpose web browsers and email clients, or software expressly licensed by us) or services that interact or interoperate with CL, e.g. for downloading, uploading, posting, flagging, emailing, search, or mobile use. Robots, spiders, scripts, scrapers, crawlers, etc. are prohibited, as are misleading, unsolicited, unlawful, and/or spam postings/email. You agree not to collect users’ personal and/or contact information (“PI”).

What does this all mean? It’s pretty simple to break down.

- You can only access Craigslist via a web browser or email client.

- You can only post to Craigslist using a web browser or their bulk posting API.

- You cannot scrape data with a spider, crawler, script, or bot of any sort.

- You cannot harvest user personal data or contact information.

Additionally, of course, there are the basic anti-spam measures as well. In short, the entire focus of this article – scraping Craigslist data using third party software – is against the CL terms of use.

The Legality of Scraping Craigslist

Why do I bring this up? Two reasons, primarily. One is obvious enough; we’re a site that mainly provides guides to and reviews of proxies, and proxies are essential to this process. The other is a basic warning.

Anything you do while following these instructions is on you. You now know, going into it, that it’s against the terms of use for the site. You are thus liable for anything that happens, ranging from having your access blocked or your posts removed to having your IP banned. You could potentially even be subject to legal action.


Is Scraping data from Craigslist Legal?

Craigslist has, in the past, even taken that legal action. It all depends on the scale of your scraping, of course, and the usage of the data you harvest. Data analysis is more or less fine. Commercial use, particularly commercial use that steps on CL’s territory, will enrage the beast.

The most notable instance of this was the recently-settled legal fight between Craigslist and the 3Taps API creator, itself named 3Taps.

Essentially, 3Taps created a Craigslist data harvesting API. They partnered with Padmapper, a company that used the real estate data harvested from Craigslist and overlaid it on a map. This produced a real estate availability map, which is honestly a very useful function, and it’s amazing that Craigslist hasn’t made something of the sort on their own. That’s for the next section, though.

Craigslist obviously didn’t approve of having the data from their site used against their terms of service on a third party site. They started a legal suit against both 3Taps and Padmapper, which began as early as June of 2012, and was only just settled in June of 2015. Both sites were required to stop harvesting data, and 3Taps paid Craigslist a tidy million dollars.

While 3Taps and Padmapper both still exist using data from non-Craigslist sites, the settlement hurt, and it’s just one example of what could happen if you try to scrape CL data and use it commercially.

The primary mistake these businesses made was ignoring when CL sent out a cease and desist letter and banned their IPs. They continued to circumvent those restrictions and scraped data, which in turn led to further legal action. My recommendation? If you get a C&D letter, comply. It’s probably not worth it to you.

Issues With Craigslist

Craigslist is a site with a lot of issues. It debuted as a website in 1996, but how much has it changed since then? They have had a few major updates over the years, but just compare the current design to an Internet Archive snapshot of the site from its early days. It’s hardly changed at all. It’s centered rather than left-aligned, and it has better coloring and spacing, but it’s largely identical.

The user interface hasn’t changed much, but it has obscured more data than it used to. These days, you see three types of ads posted.

- Ads with plaintext contact information. These are usually posted by businesses looking to get people to contact them. These businesses have staff to answer the phones, and thus weed out unsavory callers.

- Ads with obfuscated contact information. These are the people who post personal ads and post their phone numbers with a format like (five…5,,,5) 1two….three-four56’’’’7. They do this so a human can, with a bit of difficulty, parse the phone number, but a bot finds it impossible.

- Ads with no contact information. If you want to contact the poster of the ad, you need to send an email to the anonymized email address provided by Craigslist as a forwarding address. You see nothing of the poster, but they see your return address and are free to respond in kind.

Beyond that, there are issues with what is and isn’t allowed on CL these days. Post titles are free to include all sorts of Unicode symbols, and in fact, it almost makes it more effective to do so than to not, because normal text headlines don’t stand out. This also presents a problem to scrapers, which need to figure out how to parse these special characters or remove them altogether.
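As a rough illustration of the "parse or remove" choice, here is a minimal Python sketch using only the standard library. The function name `clean_title` and the set of Unicode categories it drops are my own assumptions, not anything Craigslist or a particular scraper prescribes; adjust them to the titles you actually see.

```python
import re
import unicodedata

# Unicode general categories to strip: "So" covers stars, check marks and
# emoji; "Sk", "Cf" and "Mn" cover modifier symbols, invisible format
# characters and stray combining marks. This choice is an assumption.
DROP_CATEGORIES = {"So", "Sk", "Cf", "Mn"}


def clean_title(raw_title):
    """Return a plain-text version of a posting title."""
    # NFKC folds "compatibility" characters such as fullwidth letters
    # into their ordinary equivalents.
    folded = unicodedata.normalize("NFKC", raw_title)
    kept = "".join(
        ch for ch in folded if unicodedata.category(ch) not in DROP_CATEGORIES
    )
    # Collapse the gaps left behind by the removed symbols.
    return re.sub(r"\s+", " ", kept).strip()
```

Currency signs and ordinary punctuation are left alone, so a price in the headline survives the cleanup.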

And, of course, there’s the ongoing problem of spam. This isn’t so much a problem in more “serious” sections, like the real estate section, that are somewhat heavily moderated. Rather, it’s a problem in more personal sections, like Free, Jobs, and the entire Personals category.

Oh, CL does have anti-spam measures. Sometimes they require phone verification. They have a posting limit, excepting the bulk post API, which only works in certain sections. They have an automated system to lock out people who break the rules. None of it works.

The worst part is, Craigslist was making moves to improve the flexibility and viability of the site, a few years ago. You could use a lot of HTML to customize your postings, to make the thin site itself look more robust and to provide more information in better ways. In 2013, Craigslist removed these features, returning the site to its basic black and white look. They called it Hurricane Craig, because web monitors and marketers are nothing if not overdramatic.

There’s only one benefit to Hurricane Craig, and that’s the fact that it standardized a lot more of the data in posts. It makes it much easier for a robot to pull data from a browser window, rather than needing to find and parse data in code based on certain criteria. So, good for you, Craigslist; you made it easier for us to do what you don’t want.

Why You Might Scrape Craigslist

What possible reason could you have to scrape Craigslist data? Well, there are a lot of different reasons.

On the analytical front

You could always just want to harvest data to write a report. Investigative journalism still exists, rare as it may be these days. You might want to scrape all of the posts in a given section and analyze things about them, like average prices for products, or frequency of posting, or comparing the type of item with how hard it is to contact the user. None of this is profitable, of course; it’s just information for you to use in other ways. Honestly, I think Craigslist would be fine with this, and I think you’d be safe doing it, because they wouldn’t win a court case over it. Of course, I’m not a lawyer, so take that with a chunk of salt.
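To make the "average prices" idea concrete, here is a small sketch of what that analysis might look like once you have a list of price strings. The helper names and the sample format ("$1,200") are assumptions for illustration; real listings will be messier.

```python
import re
import statistics

# Matches a dollar amount such as "$800" or "$1,200.50".
PRICE_PATTERN = re.compile(r"\$\s*(\d[\d,]*(?:\.\d+)?)")


def parse_price(text):
    """Return the first dollar amount in text as a float, or None."""
    match = PRICE_PATTERN.search(text)
    if match is None:
        return None
    return float(match.group(1).replace(",", ""))


def summarize_prices(price_texts):
    """Return count, mean and median of the prices that could be parsed."""
    prices = [p for p in map(parse_price, price_texts) if p is not None]
    if not prices:
        return None
    return {
        "count": len(prices),
        "mean": statistics.mean(prices),
        "median": statistics.median(prices),
    }
```

Entries without a recognizable price (a "free" listing, say) are skipped rather than counted as zero, which keeps them from dragging the average down.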

On the personal front

You could harvest data for information you want to use yourself. If you’re shopping for used cars, for example, you might want to harvest all of the data on used cars to correlate prices, locations, and make/model information about the vehicles so you have one central location to browse through. As useful as Craigslist can be, their browsing and filtering kind of sucks.

On the profitable front

You can scrape data for something you would like to buy and resell. One common target is concert and event tickets; you can monitor events that are sold out, scrape Craigslist to locate tickets for those events being sold, buy up any below a certain price point, and resell them for more elsewhere, like eBay. This does, of course, rely on a lot of personal effort, but hey, some people will do a lot to make a few bucks.

On the commercial front

You can use scraped data to generate leads. You could scrape the Wanted section for anyone who is searching for a service or item you provide, and then reach out to them to sell your product. It’s probably not a very efficient means of generating leads, and possibly no more effective than posting a selling ad in the first place, but it’s there.

Of course, all of this relies on your willingness to violate the Craigslist terms of service. I highly recommend avoiding any overt commercial usages. Going the route of Padmapper opens you up to all the same possible legal damages, and there’s already a legal precedent for the arguments that can and cannot be successful.

A step-by-step guide to Scraping Data from Craigslist

The exact method you use for scraping data will, unfortunately, depend a lot on the tool you decide to use. The general process will look something like this.

Step 1: Pick a Tool

The first step is to pick a scraping tool you would like to use to scrape Craigslist. You can, if you want, develop one yourself. It’s an interesting exercise if you’re a coder. If you’re not, well, there’s no reason to bother making one when so many different tools already exist. Here’s a rundown of a few options, though they are by no means all the options available.

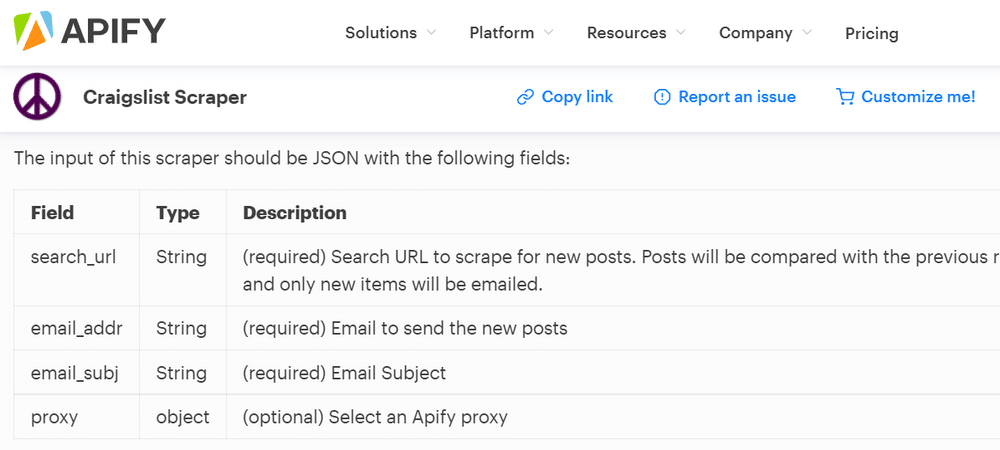

Apify Craigslist Scraper

Apify is a web scraping platform that includes hundreds of ready-made tools for scraping popular sites. The Apify Craigslist Scraper is free and easy to use and lets you scrape for posts based on any search criteria.

The scraper will extract and download the images, prices, date posted, and the URL of the posts. You can schedule the crawler to run as often as you like, and it will even send you an email alert whenever new posts are found. You can use the built-in Apify proxy service with the scraper, so you don't even need to worry about setting up proxies.

Cloud Crawler

This crawler is a web spider working specifically in the cloud, which makes step 2 a little unnecessary. It is, however, quite difficult to use.

There’s not much documentation for it. It’s good if you want to experiment with coding but don’t want to develop a scraper from scratch. On the plus side, it’s a free open source project.

Visual Web Ripper

Where Cloud Crawler is coding raw HTML in a notepad txt file, Visual Web Ripper is Dreamweaver. It’s a very user-friendly, graphical web ripper that allows you to point at the information you want to scrape, and the program does the rest.

It has video demonstrations, a fancy website, and everything. It does have limitations, however. The free trial only scrapes up to 100 elements on a website, an allowance that can get eaten up by scripts and code, and it only lasts fifteen days. The full version is also very expensive: the license, including lifetime upgrades, is $350.

Python Craigslist Scraper

This is another open-source code scraper, but it’s a little easier to use. Free, as with anything on Github, it’s coded in one of the easiest languages to learn. It’s possibly the most popular free CL scraper out there.

https://www.youtube.com/watch?v=4o2Eas2WqAQ

To scrape Craigslist posts using Python and Selenium, follow these steps:

- Install the Selenium Python library and the appropriate web driver for your web browser.

- Import the Selenium library and create a new Selenium `WebDriver` object.

- Use the `get()` method of the `WebDriver` object to open the Craigslist post page in your web browser.

- Use the `find_element()` method of the `WebDriver` object with `By.XPATH` to select the specific elements on the page that contain the data you want to scrape. (The older `find_element_by_xpath()` method was deprecated in Selenium 4 and removed in later 4.x releases.) For example, if you want to scrape the post title, you can use the following code: `title = driver.find_element(By.XPATH, "//span[@class='postingtitletext']/span[@id='titletextonly']")`

- Extract the data from the selected elements using the appropriate property or method, such as `text` or `get_attribute()`. For example, if you want to extract the post title, you can use the following code: `title = title.text`

- Use `try` and `except` statements in your code to handle any errors that may occur while scraping the Craigslist post. For example, if the element you are trying to scrape is not found on the page, your code should gracefully handle the error and continue scraping other data.

- Use the `time.sleep()` function in your code to introduce delays between page loads. This can help to prevent your IP address from being blocked by Craigslist for excessive scraping.

- Save the scraped data to a file or database for future use.

Following these steps can help you scrape Craigslist posts using Python and Selenium in an orderly and efficient manner.
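The steps above can be sketched roughly as follows. This is a minimal outline, not a finished scraper: the function names, the five-second default delay, and the CSV layout are my own assumptions. It uses the Selenium 4 style `find_element()` call, passing the string "xpath" (the value of Selenium's `By.XPATH` constant), and it catches a broad `Exception` where a real script would more likely catch Selenium's `NoSuchElementException`.

```python
import csv
import time

# XPath for the post title, taken from the example in the steps above.
TITLE_XPATH = "//span[@class='postingtitletext']/span[@id='titletextonly']"


def scrape_titles(driver, urls, delay_seconds=5.0):
    """Open each URL with a Selenium WebDriver and collect post titles."""
    rows = []
    for index, url in enumerate(urls):
        if index:
            time.sleep(delay_seconds)  # pause between page loads
        try:
            driver.get(url)
            # "xpath" is the value of selenium's By.XPATH constant.
            title = driver.find_element("xpath", TITLE_XPATH).text
        except Exception as error:  # e.g. the element is missing
            print(f"Skipping {url}: {error}")
            continue
        rows.append({"url": url, "title": title})
    return rows


def save_rows(rows, path):
    """Write the scraped rows to a CSV file with a header line."""
    with open(path, "w", newline="", encoding="utf-8") as handle:
        writer = csv.DictWriter(handle, fieldnames=["url", "title"])
        writer.writeheader()
        writer.writerows(rows)
```

With Selenium installed, you would create the driver yourself (for example `webdriver.Firefox()` after `from selenium import webdriver`), pass it to `scrape_titles()` along with your list of post URLs, hand the result to `save_rows()`, and call `driver.quit()` when you are done.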

Scrapy

This is, in my opinion, one of the most useful, robust, and legitimate scrapers out there. It’s billed as an all-purpose web crawler, so you can use it for a lot more than just Craigslist.

It’s also much less limited, it’s easy to configure, and it’s free. Really, I just saved the best for last. The best part about Scrapy is documentation. For example, if you want to scrape Craigslist, you can follow this tutorial which was built around scraping nonprofit jobs in a specific area. It may look a little intimidating, but it’s really not that bad.


Step 2: Use Proxies Whenever Possible

How do you avoid triggering CAPTCHAs and having your IP automatically blocked while scraping Craigslist?

Remember how I mentioned Craigslist is pretty aggressive about stopping scrapers? Proxies are a solution. Their only way to identify a scraper is to notice that the same IP address is accessing page after page, very quickly.

They can’t even tell what that user is doing; it could just be browsing, like Google’s crawlers. I’m sure they have whitelisted Google, but they won’t whitelist you.

Proxies work by funneling traffic through a rotating selection of web servers, hiding the origin point from the website. Instead of seeing one IP visit a hundred pages in a row, Craigslist would see 20 different IPs visiting 5 pages each. That’s a much more reasonable number, and it’s not going to get you restricted.
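The arithmetic is easy to check with a few lines of Python. This sketch only shows how a round-robin rotation spreads pages across a pool; it sends no traffic. The function names are made up for illustration, and the proxy addresses and URLs are placeholders from reserved documentation ranges.

```python
from collections import Counter
from itertools import cycle


def assign_proxies(urls, proxies):
    """Pair each URL with a proxy, cycling through the pool in order."""
    if not proxies:
        raise ValueError("at least one proxy is required")
    # zip() stops when the URLs run out, so cycle() never loops forever.
    return list(zip(urls, cycle(proxies)))


def requests_per_proxy(assignments):
    """Count how many URLs each proxy ends up fetching."""
    return Counter(proxy for _url, proxy in assignments)


# Placeholder values: 100 pages and a pool of 20 proxies.
urls = [f"https://example.org/page/{n}" for n in range(100)]
proxies = [f"http://203.0.113.{n}:8080" for n in range(1, 21)]
load = requests_per_proxy(assign_proxies(urls, proxies))
print(load.most_common(3))
```

With 100 pages and 20 proxies, every proxy in the pool is assigned exactly 5 pages, which is the split described above.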


Granted, you need to work out how to filter your scraper through a proxy. Scrapy has some documentation about it, but it’s up to you to vet the code and get it to work with your configuration.
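For Scrapy specifically, one common approach is a small downloader middleware that sets `request.meta["proxy"]`, which is the key Scrapy's built-in `HttpProxyMiddleware` reads. The sketch below is one way to do that, not the official recipe: the class name, the `PROXY_LIST` setting, and the module path in the comment are all hypothetical, and you should check the downloader middleware documentation for your Scrapy version before relying on it.

```python
import random


class RandomProxyMiddleware:
    """Downloader middleware that assigns a proxy to every outgoing request."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("PROXY_LIST must contain at least one proxy")
        self.proxies = list(proxies)

    @classmethod
    def from_crawler(cls, crawler):
        # PROXY_LIST is a hypothetical custom setting, e.g. in settings.py:
        #   PROXY_LIST = ["http://203.0.113.1:8080", "http://203.0.113.2:8080"]
        return cls(crawler.settings.getlist("PROXY_LIST"))

    def process_request(self, request, spider):
        # Scrapy routes the request through whatever URL is stored here.
        request.meta["proxy"] = random.choice(self.proxies)
        return None  # let Scrapy continue processing the request


# To enable it, something like this would go in settings.py
# (the module path is an assumption about your project layout):
#   DOWNLOADER_MIDDLEWARES = {
#       "myproject.middlewares.RandomProxyMiddleware": 350,
#   }
```

The priority of 350 is chosen so this runs before the built-in `HttpProxyMiddleware`, which sits at 750 in Scrapy's default middleware order. Picking a proxy at random is the simplest policy; a round-robin rotation would work just as well.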

Step 3: Harvest and Collate Data

Once you have your scraper set up and your data ready to be collected, just run it and collect the data. Chances are, it will be output into a CSV file, which can be opened in any spreadsheet program, like Excel or Google Sheets.
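If you would rather collate the CSV in code than in a spreadsheet, Python's standard `csv` module is enough for a first pass. The sketch below drops duplicate rows, which tend to pile up when the same post is scraped on several runs. The column names (`url`, `title`, `price`) are assumptions; use whatever headers your scraper actually writes.

```python
import csv
import io


def load_unique_rows(csv_file, key="url"):
    """Read rows from an open CSV file, keeping the first row per key value."""
    seen = set()
    unique = []
    for row in csv.DictReader(csv_file):
        if row[key] in seen:
            continue  # same post scraped twice
        seen.add(row[key])
        unique.append(row)
    return unique


# Stand-in for a real file; with actual output you would use
# open("listings.csv", newline="", encoding="utf-8") instead.
sample = io.StringIO(
    "url,title,price\n"
    "https://example.org/1,Bike,120\n"
    "https://example.org/2,Desk,45\n"
    "https://example.org/1,Bike,120\n"
)
for row in load_unique_rows(sample):
    print(row["title"], row["price"])
```

`DictReader` returns every value as a string, so convert the price column to a number before doing any math on it.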

Go through the data and do with it as you will! I’ll caution you again not to make a public commercial use out of it.

Craigslist is much more likely to send the C&D lawyers after you if you do. Personal use is a lot safer; the worst they can do is block your IP, which won’t matter if you’re using a proxy.