GSA Search Engine Ranker is a powerful tool when used appropriately. When used poorly, it can come back to bite you in the ass, hard. Unfortunately, it’s incredibly easy to use poorly, particularly if you’re not used to the relatively arcane program and its features.

GSA SER works on a simple premise. One of the most potent of all forms of SEO is the backlink. In order to gain a powerful boost to your SEO, you need a number of valid, useful backlinks. The more the better (generally speaking).

Building these backlinks is a long and time-consuming process that partially involves luck and persistence. It’s also where many young webmasters make their first dangerous mistakes, falling victim to thin schemes on Fiverr or a black hat web forum. They end up with a ton of spam backlinks, which hurt their site overall.

Ideally, you would find a way to automate this process, without risking black hat penalties or linkspam flags. GSA SER is a tool that does just that. It’s essentially an automated scraper and link submission tool, though using it specifically for website scraping is a terrible idea. There are better scrapers, specifically scrapers that have additional useful features.

Most similar pieces of software require you to build a manual list of sites to submit, but GSA allows you to build one through the program. More importantly, that database is dynamic and ever-changing. When a site dies or is delisted, making it useless to you, it’s removed from the list. When a scan shows a new site that’s a ripe target for a link, it’s added to the list. You can even rank sites in different value tiers, so you can put your careful and valuable links on the high tier sites.

Proxies Matter

Here’s the thing about GSA Search Engine Ranker, and why it’s risky for inexperienced users: it throws links and content onto a wide variety of sites, very quickly. If you’re not using a content spinner (one is bundled with the software) and a list of proxies, it looks like hundreds or thousands of posts with similar or duplicate content are all coming from the same user. This causes two problems.

First, without content spinning, every post carries the same text, and all of those copies point back to your site. Google treats that kind of mass duplicate content as a spam signal, so it can quickly earn your site a penalty.

Second, when all of your submissions come from the same IP, it’s easy for that IP to be banned. A ban kills the utility of GSA SER until you’re able to use a different IP, and the new IP soon runs into the same bans or a wall of CAPTCHAs. This is where you need proxies.

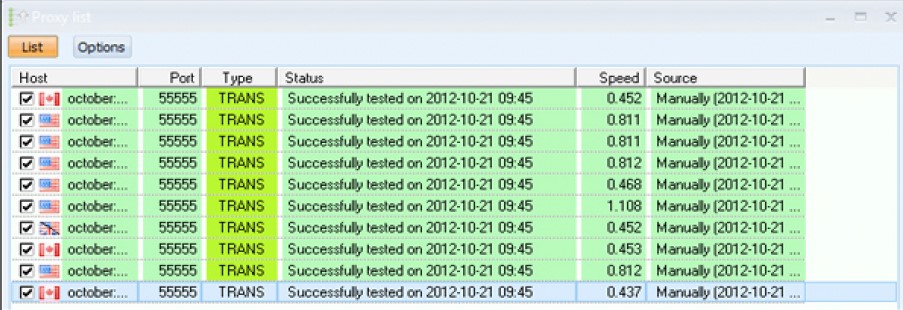

With a proxy list, GSA is able to post these automated links from a wide range of different geographic locations. Of course, it’s all originating from your computer, but no one but you knows that.

Proxy Quality

However, you can’t use just any old proxy list. Proxies are notoriously fickle, particularly free proxies. For one thing, they’re prone to dying or drying up without warning. They’re also prone to high latency, when a lot of people are using the same public proxies at the same time.

Some public proxies also impose advertising or overlays on your content, which can hinder the operation of a tool like GSA.

You also have the issue of public proxy lists ending up on site blacklists. A proxy IP does you no good if it can’t post to the sites on your scraped list.

This is why you need a private proxy network. You need a large number of proxies, all of which are low-latency, do not impose interstitials or overlays, and are guaranteed to work for submission. When you pay for a proxy list, you’re also paying for upkeep; if a proxy dies, a new one will take its place. This allows you to maintain the efficiency of GSA without issues.

Here’s a good tutorial for configuring a proxy list in GSA, though you do need to actually purchase access to a private proxy list if you want to benefit from it.

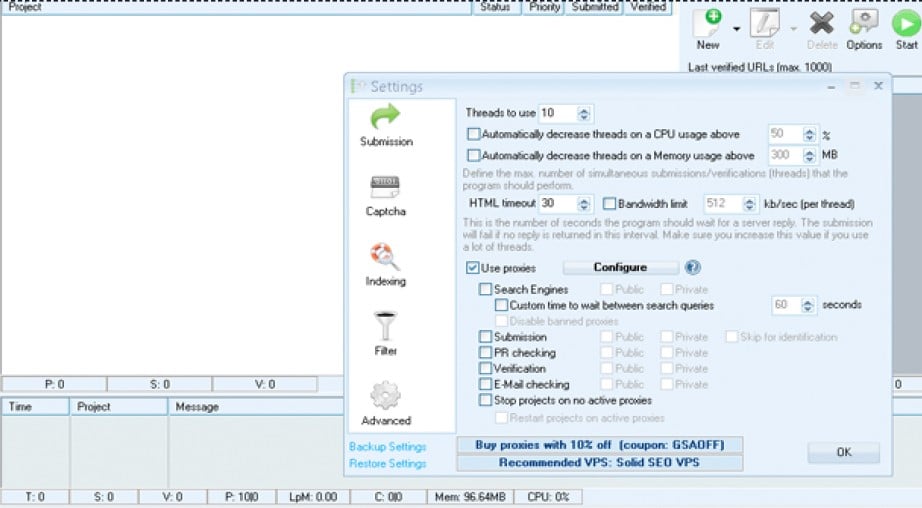

There’s one other thing to consider with your proxies: you need enough on your list to handle the volume of links you’re trying to generate. This is where threading comes in. GSA SER runs its submissions as individual threads and spreads them across your proxies automatically.

Essentially, this allows you to divide your link creation among a variety of proxies. If you want to create 100 links, and you only have one proxy, 100 links must be made through one IP; this is bad. If you want to make 100 links, and have 50 proxies, you only need to make two links per proxy. This is much better. You don’t need to go all the way down to one link per proxy; this wastes a lot of time on your proxies.

An ideal thread ratio is somewhere around two or three. That is, two or three links created per proxy.
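The arithmetic above is easy to script before you buy a proxy package. The following is a minimal sketch, not part of GSA SER itself; the function names `proxies_needed` and `load_per_proxy` are made up for illustration, and the default of two links per proxy simply follows the ratio suggested above.

```python
import math


def proxies_needed(total_links: int, links_per_proxy: int = 2) -> int:
    """Smallest proxy count that keeps every proxy at or below links_per_proxy."""
    if total_links < 0 or links_per_proxy < 1:
        raise ValueError("total_links must be >= 0 and links_per_proxy >= 1")
    # Round up: a leftover link still needs a proxy to go through.
    return math.ceil(total_links / links_per_proxy)


def load_per_proxy(total_links: int, proxy_count: int) -> float:
    """Average number of links each proxy has to carry."""
    if proxy_count < 1:
        raise ValueError("proxy_count must be >= 1")
    return total_links / proxy_count


if __name__ == "__main__":
    print(proxies_needed(100, 2))   # proxies required at two links each
    print(proxies_needed(100, 3))   # proxies required at three links each
    print(load_per_proxy(100, 50))  # average load with 50 proxies
```

If the load per proxy comes out far above three, that suggests either buying more proxies or cutting the number of links in the run.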

A Note on Penguin

Using GSA improperly can and will get your site penalized dramatically by the Penguin algorithm.

The last thing you want is to fall victim to such a penalty; it can essentially kill your site for months until you disavow or remove all of the links you made with GSA. You need to be very careful to make your links look natural. This means:

- You need to set up partial match keywords, so you’re not spamming the same anchor text everywhere.

- You need to set up variations on anchor text and keyword usage so no two links look the same out of a given handful. Occasionally some duplicates are fine, but the more dupes you have, the worse off you are.

- You need to vary up your content, typically via spinning. This will require some manual labor, though you can pay a freelance writer to create your spun content.
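One way to sanity-check the anchor text advice above is to measure how repetitive a planned anchor list is before loading it into a project. This is a rough sketch under stated assumptions: the function name `anchor_duplication` and the sample anchors are hypothetical, and nothing here reads from or writes to GSA SER. No particular "safe" share is implied; the numbers are only a way to spot an obviously lopsided list.

```python
from collections import Counter


def anchor_duplication(anchors: list[str]) -> dict:
    """Summarize how often the most common anchor text repeats in a list."""
    # Normalize case and whitespace so "Best Proxies" and "best proxies" match.
    normalized = [a.strip().lower() for a in anchors if a.strip()]
    total = len(normalized)
    if total == 0:
        return {"total": 0, "unique": 0, "top_anchor": None, "top_share": 0.0}
    counts = Counter(normalized)
    top_anchor, top_count = counts.most_common(1)[0]
    return {
        "total": total,
        "unique": len(counts),
        "top_anchor": top_anchor,
        "top_share": top_count / total,  # fraction of links using the top anchor
    }


if __name__ == "__main__":
    # Hypothetical sample list, for illustration only.
    sample = ["best proxies", "Best Proxies", "cheap private proxies", "this guide"]
    print(anchor_duplication(sample))
```

A high `top_share` means one anchor dominates the list, which is exactly the pattern the points above warn against.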

You should also try to avoid putting your links on sites that are way out of your niche, or sites that are clearly spam. The worse a site is, the less valuable a link is for you.