Are you planning to pay for a Zyte proxy (formerly Crawlera) subscription? Before you do, I advise you to read our review to learn both the pros and cons of using the tool to evade blocks.

Some web servers are very good at detecting bot behavior and blocking it. I once had to work with one such problematic site: no matter how randomly I switched and rotated proxies, made my bot sleep between requests, and tried to prevent detection, I got sniffed out as a bot after a few requests and started facing Captchas and IP bans. To cut the story short, I was able to scrape that site with minimal hassle when I switched to Scrapinghub’s Crawlera.

What is Zyte proxy (formerly Crawlera)?

Crawlera is a scraping proxy API that routes your web requests through its proxies and helps you avoid IP bans. It uses different techniques, such as IP rotation, to avoid bans and prevent Captchas from occurring.

Official site: Crawlera.io

When using Crawlera, you do not have to worry about websites’ anti-bot systems, as Crawlera takes care of evading them on your behalf. You just send a simple request and the page is returned to you, and if it is not, you do not pay for the failed request.

But things are not all rosy with Crawlera; some aspects of it aren’t so good and need improvement. These, together with its good aspects, are discussed in this review.

Zyte Proxy (Crawlera) Pros

A good number of Internet marketers and researchers have applauded Crawlera, as it has helped them scrape data from websites effortlessly. This section discusses the good aspects of Crawlera.

Excellent Success Rate

If there’s one reason I liked Crawlera when I first used it to scrape the problematic website I talked about earlier, it has got to be the success rate of the requests I sent to the site. Crawlera is undeniably one of the best when it comes to helping developers avoid blocks in order to access the data they are interested in. One of the things that makes this possible is its proxy management system. Crawlera has its own pool of residential IP addresses that it routes requests through, and it rotates them randomly but intelligently to prevent detection.

Aside from its rotating proxy network, Crawlera has a request throttling algorithm and a proprietary ban detection system. These, together with hundreds of heuristics, help ensure that requests sent through it are successful. The truth is, Crawlera is battle-tested and used by small and big businesses alike, and as such, Scrapinghub has the data it needs to make the changes that keep its system undetectable to websites.

Good Pricing Model

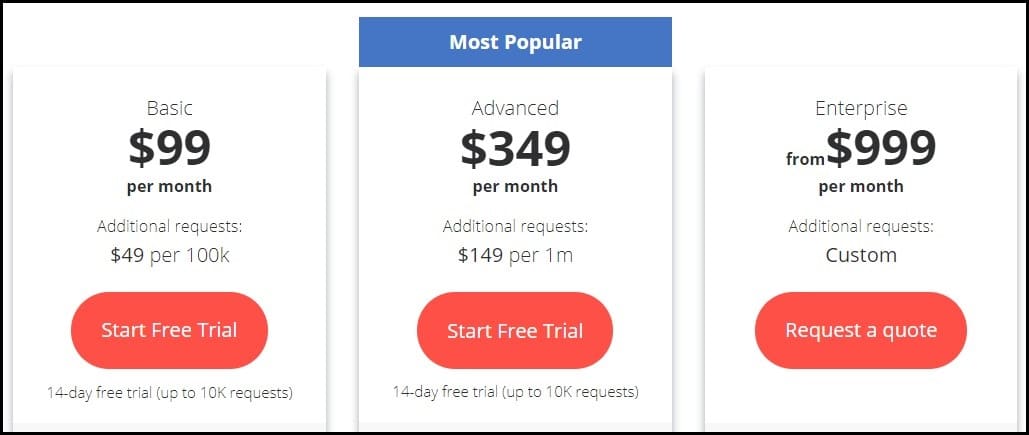

Some users might see a problem with the Crawlera pricing model, but if you ask me, it is perfect, and you should be happy it is like that. Crawlera is priced based on the number of requests.

Each plan has a maximum number of requests you can send within a month. When you send requests and they are not successful, be assured that such failed requests won’t be counted against your allowance. And because Crawlera has a high success rate, you do not have to worry much about failed requests, as they occur at a minimal rate.

The above image shows the pricing of Crawlera. You can see that it caters to all business types, from small and medium to big businesses. With $99, the Starter plan allows you to send 200,000 requests within 30 days. On this plan, you can run 50 concurrent threads, each of which runs without affecting the performance of another. Because the plans are based on the number of requests, the bandwidth you can consume is unlimited, as long as you stay within the number of requests allocated to you.
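
To put the Starter plan in perspective, you can work out what a batch of requests costs. The snippet below is only illustrative arithmetic using the $99 and 200,000-request figures quoted in this review; the function name is my own and is not part of any Crawlera tool.

```python
def cost_per_thousand(plan_price_usd: float, monthly_requests: int) -> float:
    """Return the price of 1,000 requests for a plan billed per request."""
    return plan_price_usd / monthly_requests * 1000


# Figures quoted in this review for the Starter plan.
starter = cost_per_thousand(99, 200_000)
print(f"Starter plan: ${starter:.3f} per 1,000 requests")
```

That works out to roughly half a dollar per 1,000 successful requests, regardless of how large the pages are.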

Easy to Use

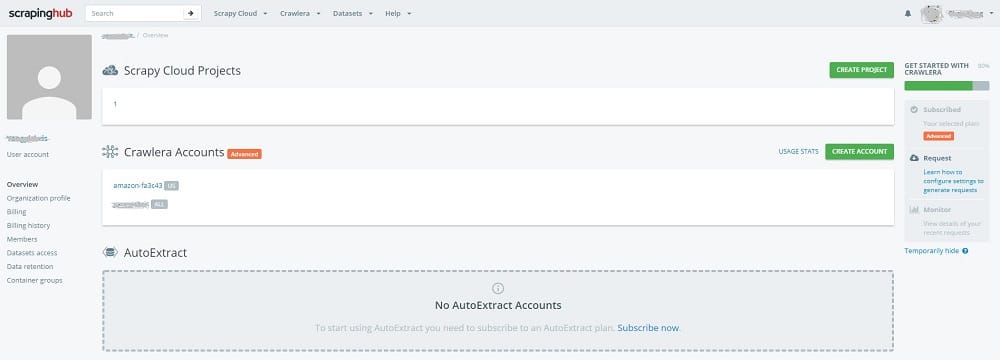

One of the reasons users of Crawlera like it is that it is easy to use. Some developer-focused software can be a pain in the neck for beginners because you have to dig deep through documentation and implementation details before you can use it. Crawlera is not like that; it is simple to use. You use it just as you would use any other proxy in your bot.

There’s an implementation guide for popular programming languages. If you log into your dashboard, there’s a page dedicated to showing you how to use it, with sample code for popular programming languages such as Python, PHP, Ruby, Node.js, Java, and C#. It also integrates nicely with Scrapy.
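
To give an idea of what "use it as you use proxies" looks like, here is a minimal sketch in Python using the third-party requests library. The proxy host and port, and the convention of sending the API key as the proxy username with an empty password, are assumptions on my part; copy the real values from the sample code in your dashboard.

```python
# Assumptions (check your dashboard for the real values):
# - the proxy endpoint host and port below
# - the API key is sent as the proxy username with an empty password
PROXY_HOST = "proxy.crawlera.com"
PROXY_PORT = 8010
API_KEY = "<YOUR_API_KEY>"  # placeholder, not a real key


def build_proxies(api_key: str, host: str = PROXY_HOST, port: int = PROXY_PORT) -> dict:
    """Build the proxies mapping that the requests library expects."""
    proxy_url = f"http://{api_key}:@{host}:{port}/"
    return {"http": proxy_url, "https": proxy_url}


def fetch(url: str, api_key: str = API_KEY):
    """Fetch a page through the proxy, as you would with any other HTTP proxy."""
    # Imported here so build_proxies() works without the third-party dependency.
    import requests

    return requests.get(url, proxies=build_proxies(api_key), timeout=60)
```

Calling `fetch("http://example.com")` with a valid key would send the request through the proxy. For HTTPS pages, the provider may require extra setup such as installing its CA certificate, so check the documentation before relying on this sketch.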

Excellent Free Trial and Refund Options

I must confess, the entire proxy and web scraping industry is known for offering free trials. However, what is provided by Crawlera is quite different. Unlike other providers, Scrapinghub provides first-time customers with 10,000 requests to be used within 14 days.

You’ll agree with me that this is even enough for some small-scale scraping work. The features attached to the free trial are the same as those attached to the Starter plan: you are limited to 50 concurrent threads, along with the plan’s other limitations.

They also have a refund option that is not advertised on their homepage or in the user dashboard. While I have never requested a refund myself, I have seen threads on their forum where users cancelled their subscriptions and asked for a refund, and the refund requests were granted in full.

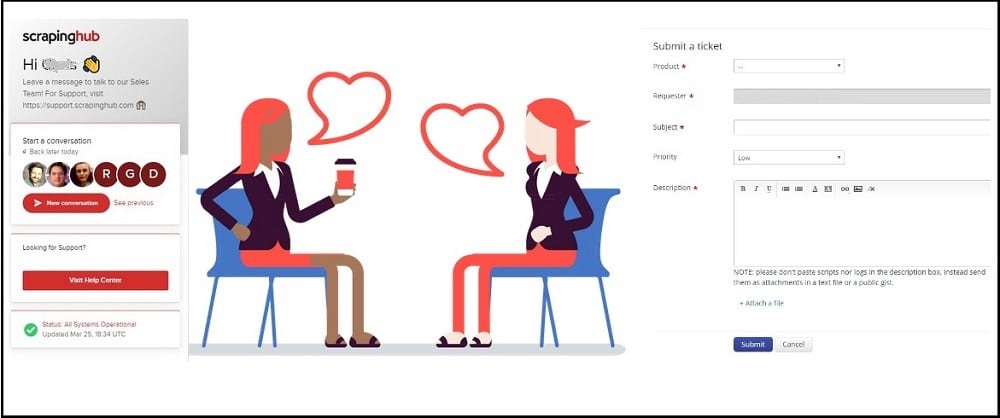

Good Customer Service

Scrapinghub’s customer service team handles support for Crawlera users, and they are doing a great job. They have live chat support that you can use to get help sorting out any issue you’re having with their software. If they aren’t online, you can leave a message, and you will get a reply as soon as a representative comes online. From the few tests I carried out, this should take only a few hours.

Zyte Proxy (Crawlera) Cons

Despite all the above, Crawlera still has aspects that need to be improved and worked on. Some of these are discussed below.

Blocks are Experienced Sometimes

I have seen users who asked for a refund solely because they were experiencing blocks. Crawlera knows all the HTTP error codes and has its own way of treating them. Usually, when a request fails, it keeps retrying up to a certain number of attempts, after which it gives up. While this can be seen as inevitable, considering how differently sites react to bot traffic, Scrapinghub should be able to find ways to make Crawlera smarter. Blocks are usually experienced when a site is fairly large. My best guess is that the number of proxies they have is not enough for some projects.
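
The retry-then-give-up behavior described above can be pictured with a small sketch. This is not Crawlera's actual implementation, which is proprietary; the set of "ban" status codes, the attempt limit, and the function names are all hypothetical values chosen for illustration.

```python
import time
from typing import Callable, Optional

# Hypothetical values for illustration; Crawlera's real rules are proprietary.
BAN_STATUS_CODES = {403, 429, 503}
MAX_ATTEMPTS = 5


def fetch_with_retries(
    send: Callable[[str], int],
    url: str,
    max_attempts: int = MAX_ATTEMPTS,
    delay_seconds: float = 0.0,
) -> Optional[int]:
    """Call send(url) until it returns a non-ban status or attempts run out.

    Returns the first non-ban status code, or None if every attempt was banned.
    """
    for attempt in range(1, max_attempts + 1):
        status = send(url)
        if status not in BAN_STATUS_CODES:
            return status
        if attempt < max_attempts:
            # Wait a little longer after each banned attempt.
            time.sleep(delay_seconds * attempt)
    return None


# Stand-in for a real HTTP call: banned twice, then successful.
responses = iter([429, 503, 200])
print(fetch_with_retries(lambda _url: next(responses), "https://example.com"))
```

Here the fake sender is banned twice before succeeding, so the call returns 200. If every attempt had been banned, it would return None, which corresponds to the "gives up" case users complain about.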

Does Not Solve Captcha

According to the information on the Scrapinghub FAQ page, Crawlera does not solve Captchas. What it does is try to prevent Captchas from occurring. When one does occur, it keeps sending the same request through different IPs until the Captcha disappears.

When it doesn’t, the request becomes a failed request. Even if the request does not fail, the retries can make some responses slow. Solving Captchas is something Crawlera could implement, since there is Captcha-solving software they could utilize, or they could create their own.
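
As a rough picture of "send the same request through different IPs until the Captcha disappears", consider the sketch below. The Captcha check (looking for the word "captcha" in the body), the proxy addresses, and the attempt limit are hypothetical and only there to make the example runnable; real detection is far more involved.

```python
from itertools import cycle, islice
from typing import Callable, Iterable, Optional


def looks_like_captcha(body: str) -> bool:
    """Naive, hypothetical check for a Captcha page."""
    return "captcha" in body.lower()


def fetch_avoiding_captcha(
    send: Callable[[str, str], str],
    url: str,
    proxies: Iterable[str],
    max_attempts: int = 3,
) -> Optional[str]:
    """Send the same request through successive proxies until no Captcha shows.

    send(url, proxy) returns the response body. Returns the first Captcha-free
    body, or None (a failed request) if every attempt hit a Captcha.
    """
    for proxy in islice(cycle(proxies), max_attempts):
        body = send(url, proxy)
        if not looks_like_captcha(body):
            return body
    return None


def fake_send(url: str, proxy: str) -> str:
    # Stand-in for a real HTTP call: only the second proxy gets a clean page.
    return "<html>ok</html>" if proxy == "10.0.0.2:8000" else "Please solve this CAPTCHA"


print(fetch_avoiding_captcha(fake_send, "https://example.com", ["10.0.0.1:8000", "10.0.0.2:8000"]))
```

In this run the first proxy hits a Captcha and the second returns the page. Each extra attempt is another round trip, which is why responses can be slow even when the request eventually succeeds.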

Do I Recommend Zyte Proxy (Crawlera)?

As I stated earlier, I have used Crawlera, and it has worked for me. I have also read accounts from users for whom Crawlera did not work in their own use case. However, the success rate is much higher than the failure rate, and as such, I recommend it. If you have any doubts about whether it will work for your project, I advise you to test it by claiming the free trial of 10,000 requests over 14 days.

Our Expert's Review

- Scraping Performance - 9.5/10

- Proxy Network - 9/10

- Proxy Functions - 8.6/10

- Customer support - 9.6/10


Zyte smart proxy alternatives

- ScraperAPI: Scraping API that handles IP rotation, headless browsers, and CAPTCHAs

- Smartproxy: Traditional residential proxy provider that supports unlimited connections

- ProxyRack: Cheap proxy solution for web scraping, with up to 1,000 simultaneous threads

- Luminati: Largest residential proxy network with the lowest fail rate, and the best Crawlera alternative