Do you consider crawling and scraping to be the same and use the words interchangeably? It might interest you to know that they are different. Read on to discover the differences and similarities between them.

Two of the most confusing terms in the industry today are crawling and scraping. If you read a lot about machine learning and data aggregation, you have probably come across the two being used interchangeably. To many, they are the same, and one word is synonymous with the other. But are they the same? What differentiates them, and how similar are they? In this article, you will learn about the differences and similarities between web crawling and web scraping.

I must confess: I have used the two words interchangeably in some of my articles. This is because there is a bit of crawling in some web scraping tasks, and scraping is an integral part of the crawling process. However, when you look closely at what each entails and what each is expected to deliver, you will discover that they are different. In discussing “crawling vs scraping”, let's start with the differences between them and end the article with their similarities.

Differences Between Crawling and Scraping

Crawling and scraping seem to be the same. However, after going through the differences that exist between them, you will discover they are not the same. Some of these differences are discussed below.

Definition


Web Scraping

Web scraping is the process of extracting specific data from web pages. It involves sending a web request, receiving a web page as the response, and then parsing that page to extract the required data while ignoring all other content. The tools used for web scraping are known as web scrapers. Web scraping is highly specialized: a scraper targets specific data on a page. In most cases, when you start a web scraping project, you already have a list of the web pages (in the form of URLs) and know how the HTML of those pages is structured.

While some web scrapers use Artificial Intelligence and Machine Learning to detect specific data, most web scrapers are site-specific. The HTML of the target pages has to be inspected first, and the scraper is then coded around that HTML. When the HTML changes, the code breaks and needs a fix to continue working. Examples of where web scraping is useful include extracting stock prices, weather data, contact details, and any other user-generated content.
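To make the site-specific nature of a scraper concrete, here is a minimal sketch using only Python's standard `html.parser` module. It assumes a hypothetical page layout where each product has a `<span class="name">` and a `<span class="price">`. Those class names and the sample HTML are made up for illustration. In a real project the HTML would come from an HTTP response rather than a string. If the site renamed those classes, this scraper would break in exactly the way described above.

```python
from html.parser import HTMLParser


class ProductScraper(HTMLParser):
    """Site-specific scraper: it only understands the (hypothetical) markup
    <span class="name">...</span> and <span class="price">...</span>."""

    def __init__(self):
        super().__init__()
        self.products = []   # extracted (name, price) pairs
        self._field = None   # which target field we are currently inside, if any
        self._current = {}   # fields collected so far for the current product

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "span" and "name" in classes:
            self._field = "name"
        elif tag == "span" and "price" in classes:
            self._field = "price"

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None

    def handle_data(self, data):
        if self._field is None:
            return  # everything outside the target spans is ignored
        self._current[self._field] = data.strip()
        if "name" in self._current and "price" in self._current:
            self.products.append((self._current["name"], float(self._current["price"])))
            self._current = {}


SAMPLE_HTML = """
<html><body>
  <nav>Home | About</nav>
  <div class="product"><span class="name">Widget</span> <span class="price">9.99</span></div>
  <div class="product"><span class="name">Gadget</span> <span class="price">24.50</span></div>
</body></html>
"""

scraper = ProductScraper()
scraper.feed(SAMPLE_HTML)
print(scraper.products)  # [('Widget', 9.99), ('Gadget', 24.5)]
```

The navigation text is never collected because the parser only records data inside the two span classes it was written for.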


Web Crawling

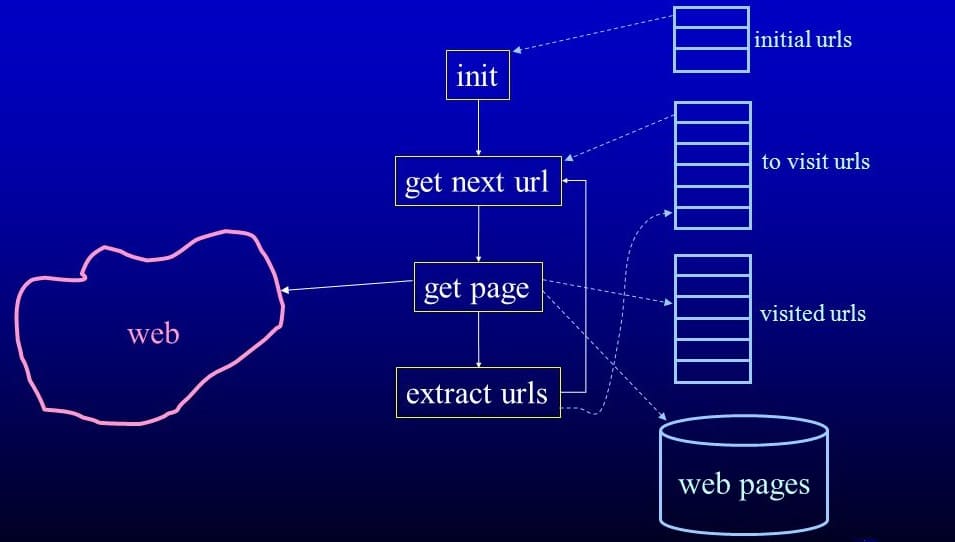

Web crawling, on the other hand, takes a more generalized approach. A crawler visits web pages, keeps a record of what is on them, and extracts the links on each page that meet specific criteria, adding them to the list of links to be crawled. Web crawling is done using computer programs known as web crawlers or web spiders. Web scrapers have specific URLs in mind and are designed around the HTML of particular pages. Web crawlers, by contrast, start with only a few seed URLs and are expected to discover new links to crawl on their own. Because of this, web crawlers are not site-specific and do not need prior knowledge of a web page before crawling it.

However, a crawler usually does not extract specific data the way a web scraper does. Strictly speaking, web crawling involves some web scraping, since links have to be extracted from each page. The most popular examples of web crawlers are the bots of search engines such as Google and Bing. These bots visit pages to index them and then follow the links on those pages in order to crawl them too.
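The crawl loop described above can be sketched as a breadth-first search: start from seed URLs, record each page, extract its links, and queue the new ones. To keep the sketch self-contained and runnable offline, it uses a hypothetical `fetch` function backed by a small in-memory dictionary of pages instead of real HTTP requests. The `example.com` and `other.org` URLs are placeholders. The "stay on the seed hosts" check is an assumed stand-in for whatever criteria a real crawler would apply.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href of every <a> tag: the bit of scraping every crawler does."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.links.append(href)


# Hypothetical in-memory "web" so the sketch runs without network access.
FAKE_WEB = {
    "https://example.com/": '<a href="/a">A</a> <a href="https://other.org/">Other</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="/">Home</a>',
    "https://example.com/b": "<p>No links here</p>",
}


def fetch(url):
    """Stand-in for an HTTP GET; returns an empty page for unknown URLs."""
    return FAKE_WEB.get(url, "")


def crawl(seeds, max_pages=100):
    """Breadth-first crawl from seed URLs, staying on the seeds' hosts."""
    allowed_hosts = {urlparse(s).netloc for s in seeds}
    frontier = deque(seeds)   # URLs waiting to be visited
    seen = set(seeds)         # URLs already queued, so none is visited twice
    visited = []              # order in which pages were crawled
    while frontier and len(visited) < max_pages:
        url = frontier.popleft()
        visited.append(url)
        parser = LinkExtractor()
        parser.feed(fetch(url))
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if urlparse(absolute).netloc not in allowed_hosts:
                continue                   # link does not meet our criteria
            if absolute not in seen:
                seen.add(absolute)
                frontier.append(absolute)
    return visited


print(crawl(["https://example.com/"]))
# ['https://example.com/', 'https://example.com/a', 'https://example.com/b']
```

Nothing in `crawl` knows the structure of any particular page. It only needs links, which is why crawlers are not site-specific.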

The Scale of Data Extraction and Technological Engineering


Web Scraping

If you have been interested in web automation before, you will know that web scraping is usually the first lesson you are taught. Why? Because it is incredibly easy, especially when the site you are targeting does little to prevent scraping. Web scraping can be done at any scale, small or large. The engineering side can be either trivial or incredibly difficult. This includes managing a database, handling proxies and Captchas, and dealing with JavaScript. It all depends on the website you are scraping and the amount of data you need.


Web Crawling

Web crawling is mostly done at a large scale, and the engineering is incredibly difficult. For instance, suppose you are developing a web crawler that goes from website to website collecting the phone numbers of people in different countries and regions. You have to take into consideration the different formats used in different countries. You also have to handle the tricks people use to disguise their phone numbers so that crawlers skip them.
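To show why formats alone already complicate things, here is a rough Python sketch that finds phone-number-like strings and normalizes them. The candidate regular expression is an assumption for illustration, not a description of any country's numbering plan. The sketch only handles the formatting side, meaning separators and the `00` international prefix, and makes no attempt to undo deliberate obfuscation. The 15-digit cap comes from the E.164 maximum length. A real crawler would more likely rely on a library with per-country metadata, such as Google's libphonenumber.

```python
import re

# Rough candidate pattern: an optional "+" followed by digits mixed with common
# separators (spaces, dots, dashes, parentheses). Illustrative assumption only.
CANDIDATE = re.compile(r"\+?\d[\d\s().-]{5,}\d")


def normalize(raw):
    """Strip separators and turn a leading international '00' into '+'."""
    digits = re.sub(r"\D", "", raw)
    if raw.strip().startswith("+"):
        return "+" + digits
    if digits.startswith("00"):
        return "+" + digits[2:]
    return digits


def extract_phone_numbers(text):
    """Return normalized numbers with 7 to 15 digits (15 is the E.164 maximum)."""
    results = []
    for match in CANDIDATE.finditer(text):
        number = normalize(match.group())
        if 7 <= len(number.lstrip("+")) <= 15:
            results.append(number)
    return results


text = "Call +44 20 7946 0958, (555) 010-4477, or 0049 30 901820. Order #12."
print(extract_phone_numbers(text))
# ['+442079460958', '5550104477', '+4930901820']
```

Note that the North American number comes out without a country code. Deciding which country a bare number belongs to is exactly the kind of problem that makes this crawler hard to engineer.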

When you consider web crawlers built for search engine indexing, it becomes clear that web crawling is serious business. It requires a great deal of engineering and an efficient database management system. Web scraping is different: there, results are mostly stored in CSV and Excel files.
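Here is a small illustration of why crawlers lean on a database rather than a flat file. The sketch uses Python's built-in `sqlite3` module with an in-memory database. Making the URL the primary key lets the database itself reject duplicate pages, which matters when a crawler keeps rediscovering the same links. The `pages` table and its columns are a hypothetical layout; large search-engine crawlers typically use much bigger, distributed storage.

```python
import sqlite3

conn = sqlite3.connect(":memory:")  # a file path would persist data between runs
conn.execute(
    """CREATE TABLE IF NOT EXISTS pages (
           url        TEXT PRIMARY KEY,  -- uniqueness enforced by the database
           title      TEXT,
           fetched_at TEXT DEFAULT CURRENT_TIMESTAMP
       )"""
)


def store_page(conn, url, title):
    """Insert a crawled page; return True if it was new, False if already stored."""
    cursor = conn.execute(
        "INSERT OR IGNORE INTO pages (url, title) VALUES (?, ?)", (url, title)
    )
    conn.commit()
    return cursor.rowcount == 1


print(store_page(conn, "https://example.com/", "Home"))     # True
print(store_page(conn, "https://example.com/", "Home"))     # False (duplicate)
print(store_page(conn, "https://example.com/a", "Page A"))  # True
print(conn.execute("SELECT COUNT(*) FROM pages").fetchone()[0])  # 2
```

With a CSV file, the same duplicate check would mean re-reading the whole file or keeping every URL in memory. That is manageable for a small scraping job, but not for a crawl of millions of pages.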


Ethical Perspective


Web Scraping

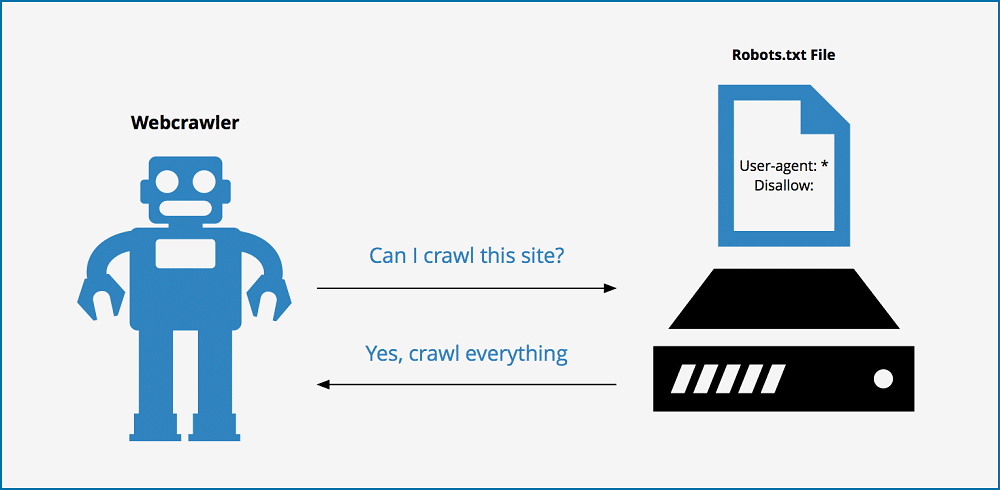

You will rarely see a well-run website allow web scrapers to access its pages; you can check this in a website’s robots.txt file. Web scrapers add no value to a website. Instead, they are notorious for extracting the publicly available data on websites free of charge while hammering them with numerous requests. There are even instances where web scrapers crash websites because of the number of requests they send in a short period of time. Even when they do not affect a website's performance, they still add to its running costs. Worse still, hardly any web scraper respects the robots.txt files of the websites it visits.
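The "hammering" problem is mostly a matter of request rate. A minimal way to soften it is a per-host throttle that enforces a minimum delay between requests to the same site. The sketch below shows this in Python. The one-second default is an arbitrary assumption, not a standard. The clock and sleep functions are injectable, and the `FakeClock` helper is a hypothetical test double that lets the logic be checked without actually waiting.

```python
import time
from urllib.parse import urlparse


class HostThrottle:
    """Enforces a minimum delay between consecutive requests to the same host."""

    def __init__(self, min_delay=1.0, clock=time.monotonic, sleep=time.sleep):
        self.min_delay = min_delay  # seconds between requests to one host (assumed value)
        self.clock = clock          # injectable so the logic can be tested without waiting
        self.sleep = sleep
        self.last_request = {}      # host -> time at which its last request was allowed

    def wait(self, url):
        """Sleep until it is polite to request `url`; return the seconds waited."""
        host = urlparse(url).netloc
        now = self.clock()
        previous = self.last_request.get(host)
        delay = 0.0
        if previous is not None:
            delay = max(0.0, self.min_delay - (now - previous))
            if delay > 0:
                self.sleep(delay)
        self.last_request[host] = now + delay
        return delay


class FakeClock:
    """Test double: time only advances when sleep() is called."""

    def __init__(self):
        self.t = 0.0

    def __call__(self):
        return self.t

    def sleep(self, seconds):
        self.t += seconds


clock = FakeClock()
throttle = HostThrottle(min_delay=1.0, clock=clock, sleep=clock.sleep)
print(throttle.wait("https://example.com/a"))  # 0.0 (first request to this host)
print(throttle.wait("https://example.com/b"))  # 1.0 (same host, must wait)
print(throttle.wait("https://other.org/"))     # 0.0 (different host)
```

In real use you would create `HostThrottle()` with the default clock and call `wait(url)` before every request. Delays are tracked per host, so a scraper that spreads its requests across many sites is not slowed down unnecessarily.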



Web Crawling

Unlike web scrapers, which do not recognize or follow the directives in a robots.txt file, ethical web crawlers do. In fact, many web crawlers, such as the ones owned by search engines, recognize and respect these directives. Just as important, crawlers such as those owned by search engines add value to a website, since they crawl its pages in order to index them.

However, this does not mean that all web crawlers are ethical. Some crawlers, such as those built to harvest contact details, ignore the directives in robots.txt files, and so do other unethical crawlers. Still, compared with web scrapers, web crawlers are more likely to respect robots.txt files.
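Respecting robots.txt, as ethical crawlers do, takes very little code. Python's standard library ships `urllib.robotparser.RobotFileParser` for this purpose. The sketch below feeds it a made-up robots.txt as a list of lines so that it runs offline. The rules and the user-agent names `ExampleBot` and `BadBot` are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for https://example.com/
ROBOTS_TXT = """
User-agent: *
Disallow: /private/
Crawl-delay: 5

User-agent: BadBot
Disallow: /
""".strip().splitlines()

robots = RobotFileParser()
robots.parse(ROBOTS_TXT)

for url in ["https://example.com/blog/post", "https://example.com/private/data"]:
    allowed = robots.can_fetch("ExampleBot", url)
    print(url, "->", "crawl" if allowed else "skip")
# https://example.com/blog/post -> crawl
# https://example.com/private/data -> skip

print(robots.can_fetch("BadBot", "https://example.com/blog/post"))  # False
print(robots.crawl_delay("ExampleBot"))  # 5
```

In a live crawler, you would call `set_url("https://example.com/robots.txt")` followed by `read()` instead of `parse()`. You would then check `can_fetch()` before every request and honor `crawl_delay()` when it returns a value.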

Similarities Between Crawling and Scraping

At the beginning of this article, I pointed out that crawling and scraping are often seen as the same. The differences discussed above show that they are not. However, they do share some similarities that you also need to know. Some of these are discussed below.

They Automate Data Extraction

Both scraping and crawling are automated processes carried out by computer bots, or more precisely, web bots. Both are meant for visiting web pages and extracting publicly available data from them. However, while web scrapers need prior knowledge of the websites they will scrape, crawlers do not. All in all, both automate the archaic process of manually collecting data from websites. In fact, to crawl the web you have to scrape it: web crawling is a specialized form of web scraping.


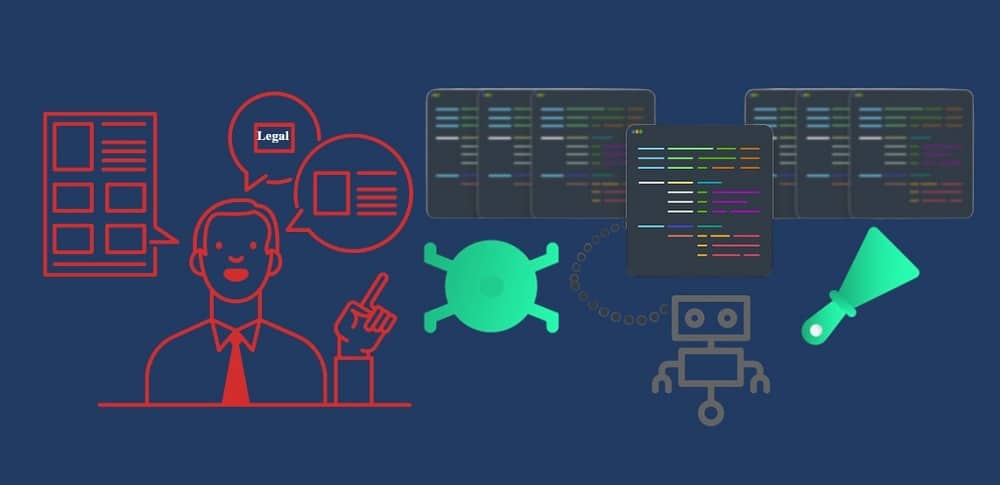

Legalities Involved

It might interest you to know that most websites on the Internet prohibit the use of any form of automation software on their web pages, with the exception of the popular search engines. Those that do allow automated access provide an official API, and web scrapers and crawlers do not use APIs. This means that whether you are developing a scraper or a crawler, you are going directly against the terms of use of your target websites. However, this does not make it illegal. In fact, scraping and crawling publicly available data on websites is generally legal, although specific technicalities can make it illegal.

Conclusion

If you do not look deeply into the activities involved in web scraping and crawling, you might think they are the same thing under different names. Some even use the words interchangeably to mean the same thing. However, if you have read all of the discussion above, you will agree with me on one point. Although they seem to be the same thing and share some similarities, they are not the same, and they have some undeniable and very important differences.